Artificial Intelligence (AI) has become a transformative force across industries, from healthcare to finance and beyond. As AI systems advance and move into production, the models themselves become targets: their training data, their parameters, and their predictions can all be attacked. In this article, we will explore the key concerns, best practices, and techniques for improving AI model security.

Concerns Surrounding AI Model Security

- Data Privacy: One of the most significant concerns in AI model security is the privacy of data used to train and fine-tune these models. Sensitive information, when mishandled, can lead to severe breaches and legal consequences. Implementing strong data privacy measures is crucial to address this concern.

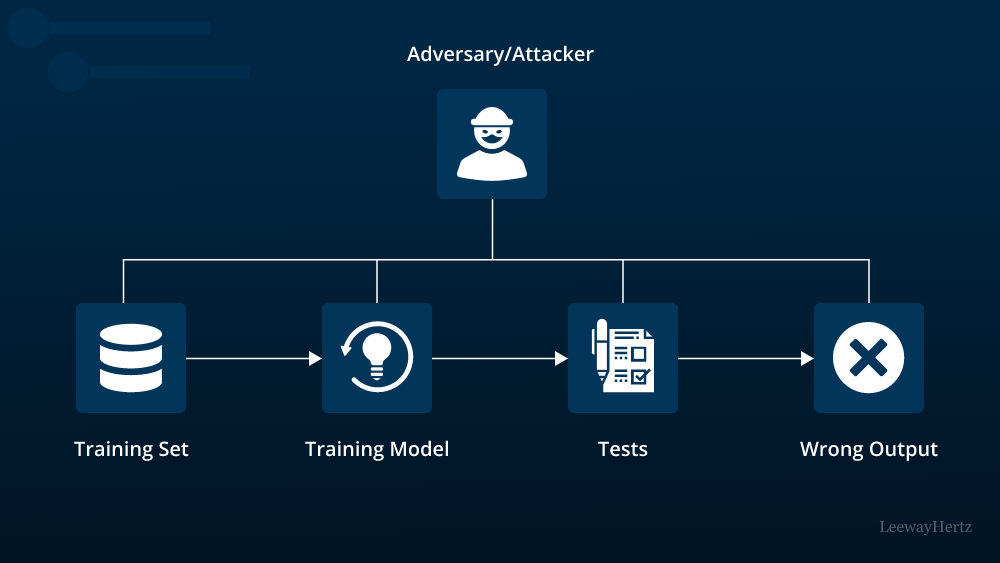

- Adversarial Attacks: AI models are susceptible to adversarial attacks, in which malicious actors make small, often imperceptible changes to input data to deceive the model. These attacks can result in incorrect predictions or biased outcomes, potentially causing significant harm.

- Model Stealing: Attackers can attempt to steal AI models, for example by reverse engineering them or by repeatedly querying a deployed model and training a copy on its responses. This can lead to intellectual property theft and unauthorized use of the model for malicious purposes.

- Model Bias and Fairness: Biases present in training data can lead to biased AI models, impacting fairness and equity. Ensuring that AI models are fair and unbiased is an ongoing concern in the AI community.

- Deployment Vulnerabilities: Deploying AI models in real-world applications can introduce vulnerabilities if not done securely. Unauthorized access to deployed models can lead to data leaks and misuse.
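
To make the adversarial-attack concern above concrete, here is a minimal sketch of a gradient-sign perturbation (in the style of the Fast Gradient Sign Method) against a toy logistic regression classifier. The weights, bias, input, and `epsilon` value are hypothetical and chosen only for illustration; attacks on real models work on far larger inputs, but follow the same idea of nudging each feature in the direction that increases the loss.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(weights, bias, x):
    """Probability of the positive class under a logistic regression model."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def sign(value: float) -> int:
    return (value > 0) - (value < 0)


def fgsm_perturb(weights, bias, x, y_true, epsilon):
    """Shift every feature by +/- epsilon in the direction that raises the loss.

    For logistic regression with log-loss, the gradient of the loss with
    respect to the input is (p - y_true) * w.
    """
    p = predict_proba(weights, bias, x)
    grad = [(p - y_true) * w for w in weights]
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]


# Hypothetical model parameters and input, for illustration only.
WEIGHTS = [2.0, -1.5, 0.5]
BIAS = -0.2
x = [0.6, 0.2, 0.4]
y = 1  # true label

x_adv = fgsm_perturb(WEIGHTS, BIAS, x, y, epsilon=0.3)
clean_score = predict_proba(WEIGHTS, BIAS, x)
adv_score = predict_proba(WEIGHTS, BIAS, x_adv)

print(f"clean input score:       {clean_score:.3f}")
print(f"adversarial input score: {adv_score:.3f}")
```

On this toy model, a change of at most 0.3 per feature is enough to push the score across the 0.5 decision threshold, which is why bounded perturbations are treated as a security issue rather than ordinary noise.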

Best Practices for AI Model Security

- Data Encryption and Access Control: Employ robust encryption methods to protect data both at rest and in transit. Implement strict access control mechanisms to limit who can access sensitive data.

- Regular Audits and Monitoring: Continuously monitor AI models for anomalies and potential threats. Regular audits of model performance and security can help detect and mitigate issues promptly.

- Robust Authentication: Implement strong authentication methods to prevent unauthorized access to AI models and their associated resources.

- Input Validation: Validate input data before it reaches the model to detect potential adversarial attacks. Techniques like input normalization and anomaly detection can help identify malicious inputs.

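- Federated Learning: Consider using federated learning, which allows models to be trained on decentralized data sources without sharing the data itself. This approach reduces the exposure of raw data, although the shared model updates can still leak information, so it is often combined with techniques such as secure aggregation or differential privacy.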
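
The federated learning item above can be sketched with its core aggregation step, often called federated averaging: each client trains locally and sends only its model weights, and the server combines them in proportion to how much data each client holds. The function name `federated_average` and the client values below are hypothetical; this sketch omits local training, communication, and any protection of the updates themselves.

```python
def federated_average(client_updates):
    """Combine client model weights into one global model.

    client_updates is a list of (weights, num_samples) pairs. Each weight
    vector is averaged in proportion to the client's sample count, so raw
    training data never leaves the client.
    """
    if not client_updates:
        raise ValueError("at least one client update is required")

    dim = len(client_updates[0][0])
    if any(len(weights) != dim for weights, _ in client_updates):
        raise ValueError("all clients must send weight vectors of equal length")

    total_samples = sum(n for _, n in client_updates)
    if total_samples <= 0:
        raise ValueError("total sample count must be positive")

    return [
        sum(weights[i] * n for weights, n in client_updates) / total_samples
        for i in range(dim)
    ]


# Two hypothetical clients with locally trained weights and dataset sizes.
updates = [
    ([1.0, 2.0], 10),
    ([3.0, 4.0], 30),
]
global_weights = federated_average(updates)
print(global_weights)
```

Weighting by sample count keeps a client with very little data from pulling the global model as strongly as a client with a large dataset.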

Techniques for Enhancing AI Model Security

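- Differential Privacy: Incorporate differential privacy techniques to protect individual data points during model training. This approach adds carefully calibrated noise, typically to gradients during training or to aggregate outputs rather than to the raw data, so that the result reveals little about any single individual. This makes it more challenging for attackers to extract sensitive information.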

- Adversarial Training: Train AI models to be robust against adversarial attacks by incorporating adversarial examples into the training data. This helps the model learn to recognize and defend against such attacks.

- Model Watermarking: Embed unique watermarks into AI models to detect unauthorized copies or usage. This can deter potential model theft.

- Ethical AI Guidelines: Adhere to ethical AI guidelines and standards to mitigate biases in AI models and ensure fairness in predictions.

- Regular Updates and Patching: Keep AI models and their dependencies up to date with the latest security patches and updates to address known vulnerabilities.

- Red Teaming: Employ ethical hacking practices by conducting red team exercises to identify weaknesses in AI model security. This proactive approach helps uncover potential vulnerabilities.
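
As an illustration of the differential privacy item in the list above, here is a minimal sketch of its simplest form, the Laplace mechanism applied to an aggregate statistic. It is not the gradient-noise variant used when training models, and the function names `laplace_noise` and `private_mean`, the clipping bounds, and the `epsilon` value are all assumptions made for the example. Plain floating-point sampling like this is also not hardened against known attacks, so a vetted library is the safer choice in practice.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_mean(values, lower, upper, epsilon, rng):
    """Release the mean of `values` with epsilon-differential privacy.

    Values are clipped to [lower, upper], so replacing one record changes
    the mean by at most (upper - lower) / n. That bound is the sensitivity
    used to scale the noise.
    """
    if not values:
        raise ValueError("values must not be empty")
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")

    clipped = [min(max(v, lower), upper) for v in values]
    true_mean = sum(clipped) / len(clipped)
    sensitivity = (upper - lower) / len(clipped)
    return true_mean + laplace_noise(sensitivity / epsilon, rng)


# Hypothetical sensitive values (for example, ages), for illustration only.
ages = [23, 35, 41, 29, 52, 38, 47, 31]
rng = random.Random()  # unseeded, so each run draws fresh noise
noisy = private_mean(ages, lower=0, upper=100, epsilon=0.5, rng=rng)
print(f"noisy mean: {noisy:.2f}")
```

A smaller `epsilon` gives stronger privacy but a noisier answer, so the value is a trade-off that has to be chosen per application.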

In conclusion, as AI becomes increasingly integrated into our daily lives, the security of AI models must be a top priority. Addressing concerns related to data privacy, adversarial attacks, model stealing, bias, and deployment vulnerabilities is essential. By following best practices such as data encryption, regular audits, and robust authentication, and incorporating advanced techniques like differential privacy and adversarial training, organizations can enhance the security of their AI models. The evolving landscape of AI security requires continuous vigilance and adaptation to stay ahead of emerging threats and protect both data and the integrity of AI systems.