“Foundation models” have become the base on which many AI applications are built. These models are large, trained on broad data, and adaptable to many downstream tasks, and they have driven advances in natural language processing, computer vision, and beyond. This article looks at what foundation models are, where they are used, and the challenges they present.

Understanding Foundation Models

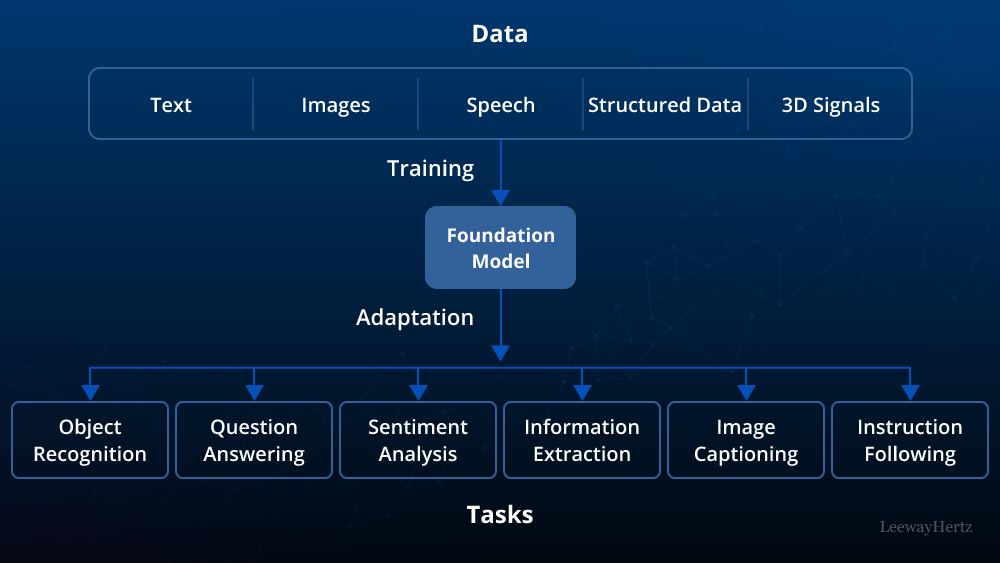

Foundation models are large neural networks that are pre-trained on broad datasets of text, images, or both, and then adapted to specific tasks through fine-tuning or prompting. They range in size from hundreds of millions to hundreds of billions of parameters. The goal of pre-training is to learn general-purpose representations, so that a single model can understand and generate human-like language or recognize patterns in images with a high degree of accuracy.

One of the best-known foundation models is OpenAI’s GPT-3 (Generative Pre-trained Transformer 3), which has 175 billion parameters and has demonstrated strong capabilities in natural language understanding and generation. BERT (Bidirectional Encoder Representations from Transformers), an encoder-only model, is likewise widely used for language-understanding tasks such as text classification and question answering.
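To make the 175-billion-parameter figure concrete, here is a rough back-of-the-envelope sketch of how much memory the weights alone would occupy at different numeric precisions. The function name and the choice of precisions are illustrative assumptions, and the estimate ignores activations, optimizer state, and any serving overhead.

```python
def weight_memory_gb(num_params: float, bytes_per_param: int) -> float:
    """Memory needed to store the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


GPT3_PARAMS = 175e9  # parameter count quoted in the text

# Bytes per parameter for a few common storage formats (illustrative selection).
for name, nbytes in [("float32", 4), ("float16", 2), ("int8", 1)]:
    print(f"{name}: {weight_memory_gb(GPT3_PARAMS, nbytes):.0f} GB")
```

Even at 16-bit precision the weights need roughly 350 GB, which is why models of this size are typically split across several accelerators.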

Applications of Foundation Models

Foundation models have found applications across a wide range of industries, transforming the way we interact with technology and data. Some key applications include:

- Natural Language Processing (NLP): Foundation models have greatly enhanced language understanding and generation tasks. They are used in chatbots, virtual assistants, and machine translation systems to provide more natural and contextually relevant responses to user queries.

- Content Generation: These models are used to generate human-like text for content creation, including news articles, marketing copy, and creative writing. They can automate content production at scale.

- Recommendation Systems: By modeling user behavior and preferences, large pre-trained models can support the kind of recommendation systems used by platforms like Netflix, Amazon, and Spotify, leading to more personalized user experiences.

- Image Recognition: In computer vision, transformer-based models such as Vision Transformer (ViT) have advanced image recognition, and related architectures are used for object detection and image generation, enabling applications in self-driving cars, medical imaging, and more.

- Healthcare: Foundation models aid in medical image analysis, disease diagnosis, and drug discovery by sifting through vast amounts of medical data and identifying patterns and anomalies.

- Finance: In the financial industry, these models are employed for risk assessment, fraud detection, and automated trading strategies by analyzing vast amounts of financial data.

- Education: AI-driven educational platforms use foundation models to personalize learning experiences, adapt content to individual student needs, and provide instant feedback.

- Legal: In the legal sector, foundation models assist in legal research, contract analysis, and predicting case outcomes by parsing and summarizing large volumes of legal texts.

Challenges and Ethical Concerns

While foundation models hold immense potential, they are not without their challenges and ethical concerns. Some of these include:

- Data Bias: Models trained on biased data can perpetuate and amplify existing biases, leading to unfair or discriminatory outcomes. Efforts to mitigate bias in training data are crucial.

- Environmental Impact: Training large foundation models requires substantial computational resources, leading to a significant carbon footprint. Researchers are working on making AI training more energy-efficient.

- Privacy and Misinformation: Models trained on web-scale data can memorize and reproduce personal information, and their ability to generate human-like text raises concerns about misinformation and deepfake content. Stricter ethical guidelines are needed to address these concerns.

- Accessibility: Access to the immense computational resources required to train and fine-tune these models is limited, potentially exacerbating inequalities in AI research and development.

- Regulation: The rapid adoption of foundation models has prompted calls for increased regulation to ensure responsible AI deployment and protect against misuse.
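To give a rough sense of scale for the environmental-impact and accessibility points above, the sketch below estimates training compute with the commonly used approximation of about 6 floating-point operations per parameter per training token. Everything here is an assumption for illustration: the token count (roughly 300 billion, as reported for GPT-3), the hypothetical accelerator throughput, and the utilization figure are not from this article.

```python
def training_flops(num_params: float, num_tokens: float) -> float:
    """Approximate training compute: ~6 FLOPs per parameter per training token."""
    return 6 * num_params * num_tokens


def accelerator_days(total_flops: float, peak_flops_per_sec: float, utilization: float) -> float:
    """Days of single-accelerator time at a given peak throughput and utilization."""
    seconds = total_flops / (peak_flops_per_sec * utilization)
    return seconds / 86_400


# Assumed inputs, for illustration only.
params = 175e9       # parameter count quoted in the text for GPT-3
tokens = 300e9       # assumed training tokens
peak = 100e12        # hypothetical accelerator: 100 TFLOP/s peak
utilization = 0.4    # hypothetical fraction of peak actually achieved

flops = training_flops(params, tokens)
print(f"Estimated training compute: {flops:.2e} FLOPs")
print(f"Single-accelerator time: {accelerator_days(flops, peak, utilization):,.0f} days")
```

Under these assumptions a single device would need tens of thousands of days, which is why training runs use large clusters and why few organizations can afford them.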

The Future of Foundation Models

The evolution of foundation models is far from over. Researchers continue to develop larger and more capable models. Empirical “scaling laws” suggest that as model size, training data, and compute grow together, loss falls in a predictable, roughly power-law fashion, which makes it possible to forecast the returns from further scaling.
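As a minimal sketch of what a scaling law looks like, the snippet below models loss as a power law in parameter count, loss = (n_c / N) ** alpha. The functional form follows published language-model scaling studies, but the constants `n_c` and `alpha` are illustrative placeholders in the range those studies report, not values from this article, and the formula assumes data and compute are not the bottleneck.

```python
def power_law_loss(num_params: float, n_c: float = 8.8e13, alpha: float = 0.076) -> float:
    """Loss predicted by a simple power law in model size.

    n_c and alpha are illustrative constants (assumptions), not fitted here.
    """
    return (n_c / num_params) ** alpha


# Each row is a model ten times larger than the previous one.
for n in [1e8, 1e9, 1e10, 1e11]:
    print(f"{n:.0e} params -> predicted loss {power_law_loss(n):.3f}")

# Under a power law, every 10x increase in size multiplies loss by the same factor.
ratio = power_law_loss(1e10) / power_law_loss(1e9)
print(f"Loss ratio per 10x parameters: {ratio:.3f}")
```

With these placeholder constants, each tenfold increase in parameters lowers the predicted loss by about 16%. The gains are steady but diminishing in absolute terms, which is the trade-off behind the push toward ever larger models.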

In the future, we can expect foundation models to become more specialized for specific domains, allowing for greater accuracy and efficiency in tasks like medical diagnosis and scientific research. Additionally, advances in model compression techniques such as quantization, pruning, and distillation should make it possible to deploy these models on resource-constrained devices, increasing their accessibility.
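One common compression technique is quantization, which stores weights as small integers instead of floating-point numbers. The sketch below shows a simple symmetric 8-bit scheme with a single shared scale. It is a toy illustration on made-up weights, not how any particular library implements it, and the function names are hypothetical.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.12, -0.5, 0.33, 0.0, 0.49]  # made-up example weights
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

# The rounding error per weight is bounded by half of one quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print("quantized:", quantized)
print("scale:", scale)
print("max error:", max_error)
```

Each weight now fits in one byte instead of four, at the cost of a small rounding error. Production schemes usually add refinements such as per-channel scales or calibration data.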

In conclusion, foundation models are the cornerstone of modern artificial intelligence, enabling groundbreaking advancements across numerous domains. While they offer immense potential, it is crucial to address the ethical concerns and challenges associated with their use. As we move forward, responsible development and deployment of foundation models will be key to harnessing their transformative power for the benefit of society.