As Artificial Intelligence (AI) becomes more integrated into our daily lives, understanding the inner workings of these systems matters more than ever. One aspect of AI that has gained significant attention is “Explainable AI,” or “XAI.” This field of research aims to bridge the gap between complex, often opaque AI algorithms and human comprehension. In this article, we look at the concept of Explainable AI, its significance, and the role it plays in making AI systems more transparent and trustworthy.

The Rise of Complex AI

AI has come a long way since its inception. From Siri and Alexa assisting us in our homes to self-driving cars navigating the streets, AI applications have made remarkable strides. However, as AI systems have become increasingly sophisticated, they have also become more complex and challenging to interpret. This complexity raises important questions about how these AI systems make decisions and whether we can trust those decisions.

Consider the scenario of a loan approval algorithm used by a bank. When an applicant is denied a loan, they have every right to know why. If the decision is made by a complex AI model, understanding the reason behind the denial can be a significant challenge. This lack of transparency in AI decision-making can erode trust, allow bias to go undetected, and raise legal and ethical concerns.

What Is Explainable AI (XAI)?

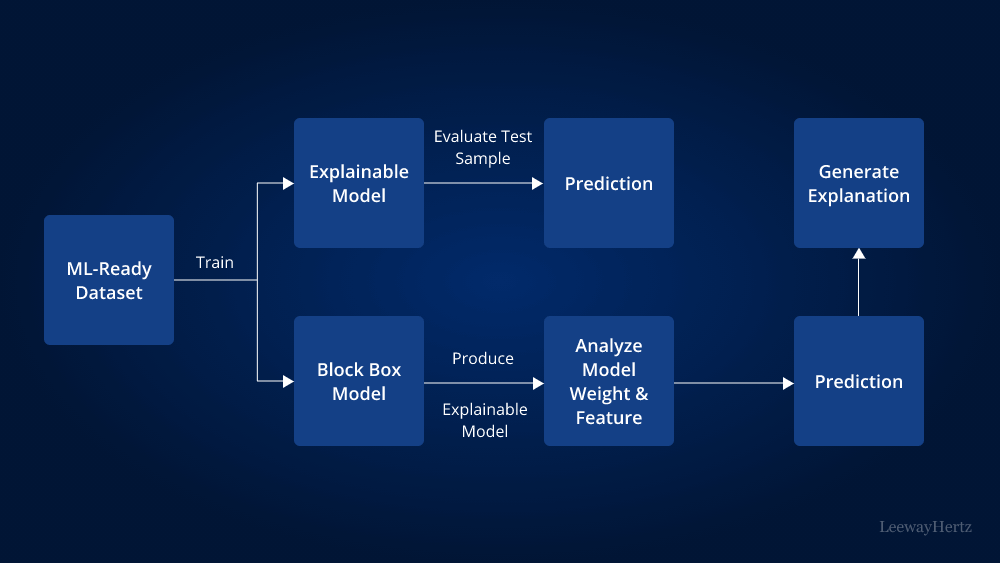

Explainable AI, often abbreviated as XAI, is an emerging field in artificial intelligence that aims to make AI systems more transparent, interpretable, and understandable to humans. XAI seeks to answer the critical question: “How and why did the AI make this decision?” It’s about demystifying the black box of AI and providing insights into its inner workings.

XAI techniques allow us to understand the reasoning behind AI decisions, making it possible to identify biases, errors, and potential issues in AI models. These techniques enable humans to interact with AI systems more effectively, ensuring that decisions made by AI are not only accurate but also fair and accountable.

Significance of Explainable AI

- Building Trust: Trust is a fundamental factor in the adoption of AI technologies. By providing explanations for AI decisions, XAI helps build trust among users and stakeholders. This is crucial in domains like healthcare, finance, and autonomous vehicles, where AI systems make critical decisions that affect human lives.

- Ethical AI: Ensuring that AI systems are fair and unbiased is a pressing concern. XAI tools can help identify and rectify bias in AI models, promoting fairness and equity. This is particularly important when AI systems are used in sensitive areas like hiring or criminal justice.

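- Legal Compliance: Some industries are subject to regulations that require organizations to explain their decisions, including decisions made by AI systems. In US consumer lending, for example, creditors must give applicants specific reasons for an adverse action. XAI can help organizations meet these regulatory requirements and avoid legal challenges.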

- Enhanced Human-AI Collaboration: In scenarios where AI and humans need to work together, such as in medical diagnoses or scientific research, XAI can facilitate better collaboration. Doctors and researchers are better placed to judge when to rely on an AI recommendation, and when to question it, if they understand the reasoning behind it.

Methods of Achieving Explainable AI

Several techniques are used to make AI systems explainable:

- Feature Importance Analysis: This method identifies which features or variables had the most significant influence on a model’s decisions. For instance, in a credit scoring model, it can show which factors, such as income or payment history, weighed most heavily when an applicant was denied credit.

- Local Interpretability: This approach focuses on explaining individual predictions rather than the model as a whole. Techniques like LIME (Local Interpretable Model-agnostic Explanations) fit a simple, interpretable model, such as a sparse linear model, that approximates the complex model’s behavior in the neighborhood of a specific prediction.

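- Model-Agnostic Approaches: These techniques can be applied to any AI model, regardless of its underlying architecture, because they only need access to the model’s inputs and outputs. SHAP (SHapley Additive exPlanations), in its kernel-based form, is a common example. Gradient-based attribution methods such as Integrated Gradients are closely related, but they require a differentiable model, such as a neural network, and so are not strictly model-agnostic.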

- Rule-Based Systems: Some XAI methods create rule-based systems that generate explanations in the form of “if-then” rules. These rules provide transparent insights into the AI’s decision-making process.
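
To make the feature-attribution idea behind methods like SHAP more concrete, here is a minimal sketch in plain Python. It computes exact Shapley values for a single prediction by trying every combination of features. Everything in it is an assumption made for illustration: the `credit_score` model, the feature names, the baseline "average applicant," and the sample applicant are all hypothetical, and the code does not use or reproduce the API of the `shap` library.

```python
from itertools import combinations
from math import factorial

# Hypothetical feature names and a hypothetical "average applicant" baseline.
FEATURES = ["income", "debt_ratio", "late_payments"]
BASELINE = {"income": 50_000, "debt_ratio": 0.30, "late_payments": 1}


def credit_score(x):
    """Toy black-box model (an assumption for illustration, not a real scorer)."""
    score = 600 + 0.002 * (x["income"] - 50_000) - 200 * (x["debt_ratio"] - 0.30)
    # Interaction term: late payments hurt more when the debt ratio is high.
    score -= 25 * x["late_payments"] * (1 + x["debt_ratio"])
    return score


def shapley_values(model, instance, baseline, features):
    """Exact Shapley attribution of model(instance) - model(baseline).

    The model is treated as a black box: it is only ever called, never inspected.
    """
    n = len(features)

    def value(subset):
        # Features in `subset` take the applicant's values; the rest stay at baseline.
        mixed = {f: (instance[f] if f in subset else baseline[f]) for f in features}
        return model(mixed)

    phi = {}
    for f in features:
        others = [g for g in features if g != f]
        total = 0.0
        for k in range(n):  # k = size of the subset that f is added to
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                s = set(subset)
                # Marginal contribution of f when added to this subset.
                total += weight * (value(s | {f}) - value(s))
        phi[f] = total
    return phi


# Hypothetical applicant whose score we want to explain.
applicant = {"income": 32_000, "debt_ratio": 0.55, "late_payments": 4}
attributions = shapley_values(credit_score, applicant, BASELINE, FEATURES)

# List features from most negative to most positive contribution.
for name, contribution in sorted(attributions.items(), key=lambda kv: kv[1]):
    print(f"{name:>14}: {contribution:+.1f}")
```

The attributions always add up to the difference between the applicant’s score and the baseline score, which is what makes this kind of explanation easy to read as “how much each feature moved the result.” Exact enumeration needs a number of model calls that grows exponentially with the number of features, so practical tools rely on sampling or model-specific shortcuts instead.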

Challenges and Future Directions

While XAI is making significant strides, it still faces challenges. The main one is balancing transparency against model performance: highly interpretable models, such as small decision trees or linear models, often do not perform as well as complex, opaque models on difficult tasks, so practitioners have to decide how much of one to give up for the other.

Furthermore, XAI is an active area of research, and ongoing efforts aim to develop more robust and widely applicable techniques. As AI systems become even more sophisticated, the need for explainability will continue to grow.

In the future, we can expect to see greater integration of XAI into AI development pipelines, with tools and libraries that make it easier for developers to incorporate explainability into their models. This will not only benefit businesses and organizations but also empower individuals to make informed decisions in an AI-driven world.

Conclusion

Explainable AI, or XAI, is a critical development in the field of artificial intelligence. It addresses the need for transparency, accountability, and trust in AI systems, making them more understandable and interpretable to humans. By providing explanations for AI decisions, XAI not only enhances trust but also helps identify and mitigate bias, ensures legal compliance, and facilitates collaboration between humans and AI.

As AI continues to permeate various aspects of our lives, the importance of XAI cannot be overstated. It represents a significant step forward in making AI systems not just powerful but also ethical and accountable tools that benefit society as a whole. As we move forward, the pursuit of explainable AI will be instrumental in shaping the future of AI technology.